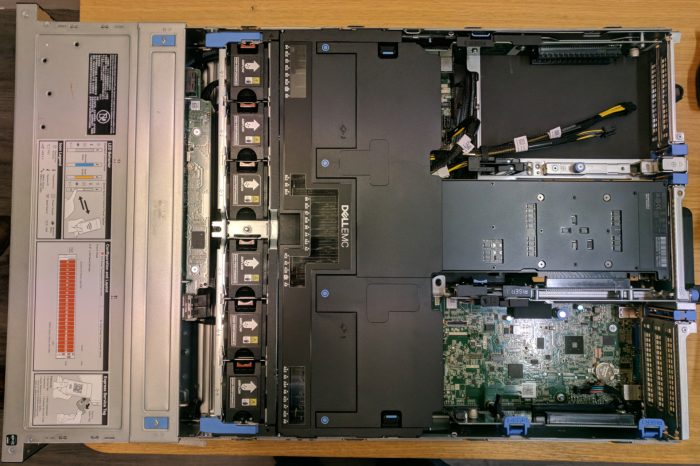

Recently at work we bought a new Dell PowerEdge R7425 server for our HPC cluster. These are some of the specifications:

- 2 AMD EPYC 7351 16-Core Processors

- 128 GB RAM (16 DIMMs of 8 GB)

- Tesla V100 GPU

Our FAI configuration automatically installed Debian Stretch on it without any problem. All hardware was recognized and working. The installation of the basic operating system took less than 20 minutes. FAI also sets up Puppet on the machine. After booting the system, Puppet continues setting up the system: installing all needed software, setting up the Slurm daemon (part of the job scheduler), mounting the NFS4 shared directories, etc. All in all, the system was automatically installed and configured in less than 1 hour.

Linux kernel upgrade

Even though the Linux 4.9 kernel of Debian Stretch works with the hardware, there are still some reasons to update to a newer kernel. Only more recent kernel versions have a k10temp kernel module that can read the CPU temperature sensors. We also had problems with fscache (used for NFS4 caching) with the 4.9 kernel in the past, which are fixed in a newer kernel. Furthermore, there have been many other performance optimizations which could be interesting for HPC.

You can find a more recent kernel in Debian’s Backports repository. At the time of writing it is a 4.18 based kernel. However, I decided to build my own 4.19 based kernel.

In order to build a Debian kernel package, you will need to have the package kernel-package installed. Download the sources of the Linux kernel you want to build, and configure it (using make menuconfig or any method you prefer). Then build your kernel using this command:

$ make -j 32 deb-pkg

Replace 32 with the number of parallel jobs you want to run; the number of CPU cores you have is a good choice. You can also add the LOCALVERSION and KDEB_PKGVERSION variables to set a custom name and version number. See the Debian handbook for a more complete howto. When the build has finished successfully, you can install the linux-image and linux-headers packages using dpkg.

We mentioned temperature monitoring support with the k10temp driver in newer kernels. If you want to check the temperatures of all NUMA nodes on your CPUs, use this command:

$ cat /sys/bus/pci/drivers/k10temp/*/hwmon/hwmon*/temp1_input

Divide the value by 1000 to get the temperature in degrees Celsius. Of course you can also use the sensors command of the lm-sensors package.
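To print the readings directly in degrees Celsius, the division can be done in the shell. This is a minimal sketch: the helper name millideg_to_celsius is made up for illustration, it truncates to one decimal instead of rounding, and it assumes the k10temp sysfs layout used in the command above.

```shell
#!/bin/sh
# Convert a hwmon reading in millidegrees Celsius to degrees with one
# decimal (truncated, not rounded).
# millideg_to_celsius is a hypothetical helper, not part of lm-sensors.
millideg_to_celsius() {
    printf '%d.%d\n' "$(( $1 / 1000 ))" "$(( ($1 % 1000) / 100 ))"
}

# Print one line per temperature sensor exposed by k10temp.
for f in /sys/bus/pci/drivers/k10temp/*/hwmon/hwmon*/temp1_input; do
    [ -r "$f" ] || continue   # glob did not match: driver not loaded
    printf '%s: %s C\n' "$f" "$(millideg_to_celsius "$(cat "$f")")"
done
```

On a machine where k10temp is not loaded, the loop simply prints nothing.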

Kernel settings

VM dirty values

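On systems with lots of RAM, you may encounter problems because the default values of vm.dirty_ratio and vm.dirty_background_ratio are too high. Both are percentages of memory, so on a machine with 128 GB the kernel can accumulate many gigabytes of dirty data before it starts writing it out. This can cause stalls when all that dirty data in the cache is flushed to disk or to the NFS server.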

You can read more information and a solution in SuSE’s knowledge base. On Debian, you can create a file /etc/sysctl.d/dirty.conf with this content:

vm.dirty_bytes = 629145600

vm.dirty_background_bytes = 314572800

Run

# sysctl -p /etc/sysctl.d/dirty.conf

to make the settings take effect immediately.
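The two values above are simply 600 MiB and 300 MiB expressed in bytes. The sketch below shows the arithmetic, in case you want to pick other limits, and prints what is currently active. The helper name mib_to_bytes is hypothetical. Note that once the *_bytes settings are written, the corresponding *_ratio settings read back as 0, because the kernel only honours one of each pair at a time.

```shell
#!/bin/sh
# mib_to_bytes is a hypothetical helper used only for this illustration.
mib_to_bytes() {
    echo "$(( $1 * 1024 * 1024 ))"
}

# Reproduce the values used in /etc/sysctl.d/dirty.conf.
echo "vm.dirty_bytes = $(mib_to_bytes 600)"
echo "vm.dirty_background_bytes = $(mib_to_bytes 300)"

# Show the values currently active on this machine, if /proc is available.
for key in dirty_bytes dirty_background_bytes dirty_ratio dirty_background_ratio; do
    if [ -r "/proc/sys/vm/$key" ]; then
        echo "current vm.$key = $(cat "/proc/sys/vm/$key")"
    fi
done
```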

Kernel parameters

In /etc/default/grub, I added the options

transparent_hugepage=always cgroup_enable=memory

to the GRUB_CMDLINE_LINUX variable.

Transparent hugepages can improve performance in some cases. However, they can have a very negative impact on some specific workloads too. Applications like Redis, MongoDB and Oracle DB recommend not enabling transparent hugepages by default. So make sure that it’s worthwhile for your workload before adding this option.

Memory cgroups are used by Slurm to prevent jobs using more memory than what they reserved. Run

# update-grub

to make sure the changes will take effect at the next boot.
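After the reboot it is worth checking that the kernel really picked up both options, since a misspelled parameter is silently ignored. The following is a sketch only; cmdline_has is a hypothetical helper name, and the script assumes the kernel spelling transparent_hugepage for the hugepage option.

```shell
#!/bin/sh
# cmdline_has is a hypothetical helper: succeeds if parameter $2 appears
# as a whole word in the kernel command line string $1.
cmdline_has() {
    case " $1 " in
        *" $2 "*) return 0 ;;
        *) return 1 ;;
    esac
}

# Check the command line of the running kernel.
if [ -r /proc/cmdline ]; then
    cmdline=$(cat /proc/cmdline)
    for param in transparent_hugepage=always cgroup_enable=memory; do
        if cmdline_has "$cmdline" "$param"; then
            echo "active:  $param"
        else
            echo "missing: $param"
        fi
    done
fi

# The kernel marks the active THP mode with square brackets.
thp=/sys/kernel/mm/transparent_hugepage/enabled
if [ -r "$thp" ]; then
    echo "THP mode: $(cat "$thp")"
fi
```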

I/O scheduler

If you’re using a configuration based on a recent Debian kernel configuration, you will likely be using the Multi-Queue Block IO Queueing Mechanism with the mq-deadline scheduler as default. This is great for SSDs (especially NVMe-based ones), but might not be ideal for rotational hard drives. You can use the BFQ scheduler as an alternative on such drives. Be sure to test this properly though, because with Linux 4.19 I experienced some stability problems which seemed to be related to BFQ. I’ll be reviewing this scheduler again for 4.20 or 4.21.

First, if BFQ is built as a module (which is the case in Debian’s kernel config), you will need to load the module. Create a file /etc/modules-load.d/bfq.conf with these contents:

bfq

Then to use this scheduler by default for all rotational drives, create the file /etc/udev/rules.d/60-io-scheduler.rules with these contents:

# set scheduler for non-rotating disks

ACTION=="add|change", KERNEL=="sd[a-z]|mmcblk[0-9]*|nvme[0-9]*", ATTR{queue/rotational}=="0", ATTR{queue/scheduler}="mq-deadline"

# set scheduler for rotating disks

ACTION=="add|change", KERNEL=="sd[a-z]", ATTR{queue/rotational}=="1", ATTR{queue/scheduler}="bfq"

Run

# update-initramfs -u

to rebuild the initramfs so that it includes this udev rule, which will then be applied at the next boot.

The Arch wiki has more information on I/O schedulers.
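To confirm that the udev rules had the intended effect, you can list the active scheduler of every block device. The kernel shows all available schedulers in queue/scheduler and puts the active one in square brackets. This is a sketch under that assumption; active_scheduler is a hypothetical helper name.

```shell
#!/bin/sh
# active_scheduler is a hypothetical helper: extracts the bracketed entry
# from a queue/scheduler line such as "mq-deadline kyber [bfq] none".
active_scheduler() {
    printf '%s\n' "$1" | sed -n 's/.*\[\([^]]*\)\].*/\1/p'
}

# Print the rotational flag and the active scheduler for each block device.
for q in /sys/block/*/queue; do
    [ -r "$q/scheduler" ] || continue
    dev=${q%/queue}      # strip the trailing /queue
    dev=${dev##*/}       # keep only the device name
    echo "$dev rotational=$(cat "$q/rotational") scheduler=$(active_scheduler "$(cat "$q/scheduler")")"
done
```

Devices whose scheduler file contains no bracketed entry are listed with an empty scheduler field.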

CPU Microcode update

We want the latest microcode for the CPU to be loaded. This is needed to mitigate the Spectre vulnerabilities. Install the amd64-microcode package from stretch-backports.

Packages with AMD ZEN support

hwloc

hwloc is a utility which reads out the NUMA topology of your system. It is used by the Slurm workload manager to bind tasks to certain cores.

The version of hwloc in Stretch (1.11.5) does not have support for the AMD ZEN architecture. However, hwloc 1.11.12 is available in stretch-backports, and this version does have AMD ZEN support. So make sure you have the packages hwloc libhwloc5 libhwloc-dev libhwloc-plugins installed from stretch-backports.

BLAS libraries

There is no BLAS library in Debian Stretch which supports the AMD ZEN architecture. Unfortunately, at the time of writing there is also no good BLAS implementation for ZEN available in stretch-backports. This will likely change in the near future though, as BLIS has now entered Debian Unstable and will likely be backported to the stretch-backports repository.

NVIDIA drivers and CUDA libraries

NVIDIA drivers

I installed the NVIDIA drivers and CUDA libraries from the installers downloaded from the NVIDIA website, because at the time of writing all packages available in the Debian repositories are outdated.

First make sure you have the linux-headers package installed which corresponds with the linux-image kernel package you are running. We will be using DKMS to rebuild the driver automatically whenever we install a new kernel, so also make sure you have the dkms package installed.

Download the NVIDIA driver for your GPU from the NVIDIA website. Unload the nouveau driver with this command:

# rmmod nouveau

Then create the file /etc/modprobe.d/blacklist-nouveau.conf with these contents:

blacklist nouveau

and rebuild the initramfs by running

# update-initramfs -u

This will ensure the nouveau module will not be loaded automatically.

Now install the driver, by using a command similar to this:

# sh NVIDIA-Linux-x86_64-410.79.run -s --dkms

This will do a silent installation, integrating the driver with DKMS so it will get built automatically every time you install a new linux-image together with its corresponding linux-headers package.

To make sure that the necessary device files in /dev exist after rebooting the system, I put this script in /usr/local/sbin/load-nvidia:

#!/bin/sh

/sbin/modprobe nvidia

if [ "$?" -eq 0 ]; then

    # Count the number of NVIDIA controllers found.

    NVDEVS=`lspci | grep -i NVIDIA`

    N3D=`echo "$NVDEVS" | grep "3D controller" | wc -l`

    NVGA=`echo "$NVDEVS" | grep "VGA compatible controller" | wc -l`

    N=`expr $N3D + $NVGA - 1`

    for i in `seq 0 $N`; do

        mknod -m 666 /dev/nvidia$i c 195 $i

    done

    mknod -m 666 /dev/nvidiactl c 195 255

else

    exit 1

fi

/sbin/modprobe nvidia-uvm

if [ "$?" -eq 0 ]; then

    # Find out the major device number used by the nvidia-uvm driver

    D=`grep nvidia-uvm /proc/devices | awk '{print $1}'`

    mknod -m 666 /dev/nvidia-uvm c $D 0

else

    exit 1

fi

In order to start this script at boot up, I created a systemd service. Create the file /etc/systemd/system/load-nvidia.service with this content:

[Unit]

Description=Load NVIDIA driver and create device nodes in /dev

Before=slurmd.service

[Service]

ExecStart=/usr/local/sbin/load-nvidia

Type=oneshot

RemainAfterExit=true

[Install]

WantedBy=multi-user.target

Now run these commands to enable the service:

# systemctl daemon-reload

# systemctl enable load-nvidia.service

You can verify whether everything is working by running the command

$ nvidia-smi
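If nvidia-smi fails, a quick first check is whether the device nodes created by the load-nvidia script are actually present. The sketch below assumes the node names used in that script; check_nvidia_nodes is a hypothetical helper, and the GPU count of 1 matches the single Tesla V100 in this machine.

```shell
#!/bin/sh
# check_nvidia_nodes is a hypothetical helper: verifies that directory $1
# contains nvidia0 .. nvidia($2 - 1), nvidiactl and nvidia-uvm.
# It prints every missing node and returns 1 if any is missing.
check_nvidia_nodes() {
    dir=$1
    count=$2
    status=0
    i=0
    while [ "$i" -lt "$count" ]; do
        if [ ! -e "$dir/nvidia$i" ]; then
            echo "missing: $dir/nvidia$i"
            status=1
        fi
        i=$((i + 1))
    done
    for name in nvidiactl nvidia-uvm; do
        if [ ! -e "$dir/$name" ]; then
            echo "missing: $dir/$name"
            status=1
        fi
    done
    return "$status"
}

# Example: a machine with a single GPU.
if check_nvidia_nodes /dev 1; then
    echo "all NVIDIA device nodes are present"
fi
```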

CUDA libraries

Download the CUDA libraries. For Debian, choose Linux x86_64 Ubuntu 18.04 runfile (local).

Then install the libraries with this command:

# sh cuda_10.0.130_410.48_linux --silent --override --toolkit

This will install the toolkit in silent mode. With the override option it is possible to install the toolkit on systems which don’t have an NVIDIA GPU, which might be useful for compilation purposes.

To make sure your users have the necessary binaries and libraries available in their path, create the file /etc/profile.d/cuda.sh with this content:

export CUDA_HOME=/usr/local/cuda

export PATH=$CUDA_HOME/bin:$PATH

export LD_LIBRARY_PATH=$CUDA_HOME/lib64${LD_LIBRARY_PATH:+:$LD_LIBRARY_PATH}