Read this article in Dutch: De waarheid over gedecentraliseerde contact tracing apps zoals Coronalert

Too long to read? Skip to the conclusion.

Table of contents

- DP-3T

- Apple/Google Exposure Notifications API

- Frequently Asked Questions – Debunking some myths

- Will these apps violate my privacy? Will the authorities know who I meet and where I am, what I do?

- How can I be sure that the app really works as promised and really does not collect and send private information?

- Why should I trust Google and Apple, who have a bad track record in privacy?

- Why does this app require Location setting to be enabled on my Android phone if no location information is collected?

- Will this app give me a warning every time an infected person passes nearby, resulting in many false positives?

- Are these apps useful if not everyone or at least a large part of the population downloads them?

- Will this drain the battery of my phone?

- Do I need to install another app when I go abroad?

- What do experts say about these apps?

- Conclusion

- Further information

- History of this article

Those who know me a bit know that I consider privacy very important. For this reason, when talk of contact tracing apps for COVID-19 started a few months ago, I thought it was an extremely bad idea. An app that constantly tracks where you are and who you meet is something you would only think possible in undemocratic nations and dictatorial regimes: something you expect in North Korea or China, but not in European countries, where our privacy is supposed to be protected by the GDPR. And what about the reliability of these apps? Bluetooth was never made for this; it would result in many false positives and negatives. No way I would ever install such an app.

That, at least, was my opinion a couple of months ago. Reading about decentralized solutions based on DP-3T and the Google/Apple Exposure Notification (GAEN) API has completely changed my mind. I use the Italian contact tracing app Immuni, and I am willing to use a similar decentralized app in any country where I am staying.

DP-3T

Decentralized Privacy-Preserving Proximity Tracing, or DP-3T, is an open protocol developed by several universities, among them ETH Zurich and EPFL in Switzerland, KU Leuven in Belgium and TU Delft in the Netherlands.

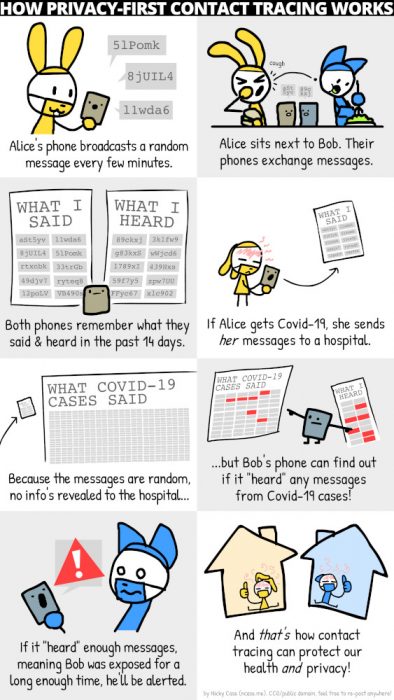

This comic shows a simple explanation of how it works.

Technically speaking, every day a new seed is created on every user’s phone, derived from the seed of the day before, and each daily seed is kept on the phone for 14 days. Ephemeral identifiers (EphIDs) are derived from this seed. These EphIDs change several times an hour.
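
The derivation can be illustrated with a minimal Python sketch. It is not the real DP-3T code: SHA-256 and HMAC-SHA256 from the standard library stand in for the primitives the protocol specifies, and the function names, the 16-byte EphID length and the 144 EphIDs per day (one every 10 minutes) are assumptions made for illustration.

```python
import hashlib
import hmac
import os
from typing import List

EPHID_LEN = 16        # bytes per EphID (assumed for this sketch)
EPHIDS_PER_DAY = 144  # one every 10 minutes (assumed for this sketch)


def next_day_seed(seed: bytes) -> bytes:
    """Derive tomorrow's seed from today's with a one-way hash."""
    return hashlib.sha256(seed).digest()


def ephids_for_day(seed: bytes, count: int = EPHIDS_PER_DAY) -> List[bytes]:
    """Expand one daily seed into `count` ephemeral identifiers.

    Stand-in construction: a key derived from the seed with HMAC,
    then HMAC over a counter, truncated to EPHID_LEN bytes.
    """
    key = hmac.new(seed, b"broadcast key", hashlib.sha256).digest()
    return [
        hmac.new(key, i.to_bytes(4, "big"), hashlib.sha256).digest()[:EPHID_LEN]
        for i in range(count)
    ]


seed_today = os.urandom(32)                # random starting seed
seed_tomorrow = next_day_seed(seed_today)  # next link in the chain
todays_ephids = ephids_for_day(seed_today)
```

Because the hash only works in one direction, knowing a seed reveals the seeds and EphIDs of later days but not those of earlier days.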

EphIDs are exchanged with other users of the tracing app over Bluetooth Low Energy (BLE), and every phone stores the EphIDs it received locally, together with the date and the attenuation of the signal, which can be used to estimate the distance.

When a user tests positive for COVID-19, they can, with the help of authorized health personnel, upload the seed of the first day on which they were contagious to a central server. All previous daily seeds are then deleted from the infected user’s phone and a completely new random daily seed is created, so that the user cannot be tracked in the future.

All other app users regularly download the list of daily seeds of all contagious users from the central server and derive all EphIDs from them. The app compares these EphIDs with the EphIDs it has stored from recent encounters. From the number of matching EphIDs the app can calculate how long the two users were near each other, and from the stored attenuation it can estimate the distance. If they were within a certain distance for longer than a certain time, the user is warned that they were exposed to a contagious user, with instructions on what to do.
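
The matching step can be sketched in Python as follows. This is an illustration under assumptions, not the code of any real app: the EphID derivation is a standard-library stand-in (HMAC-SHA256), and the constants (10 minutes per EphID, an attenuation limit of 73 dB, a 15-minute minimum) as well as all function names are hypothetical.

```python
import hashlib
import hmac
from typing import Iterable, List, Set, Tuple

EPHID_LEN = 16
EPHIDS_PER_DAY = 144        # assumed: one EphID every 10 minutes
MINUTES_PER_EPHID = 10      # assumed
RETENTION_DAYS = 14
MAX_ATTENUATION_DB = 73.0   # assumed "close enough" limit
MIN_EXPOSURE_MINUTES = 15   # assumed "long enough" limit


def ephids_for_day(seed: bytes) -> List[bytes]:
    """Stand-in derivation of one day's EphIDs from a daily seed."""
    key = hmac.new(seed, b"broadcast key", hashlib.sha256).digest()
    return [
        hmac.new(key, i.to_bytes(4, "big"), hashlib.sha256).digest()[:EPHID_LEN]
        for i in range(EPHIDS_PER_DAY)
    ]


def contagious_ephids(first_seed: bytes, days: int = RETENTION_DAYS) -> Set[bytes]:
    """All EphIDs derivable from the uploaded seed and the seeds chained after it."""
    ids: Set[bytes] = set()
    seed = first_seed
    for _ in range(days):
        ids.update(ephids_for_day(seed))
        seed = hashlib.sha256(seed).digest()  # next day's seed
    return ids


def exposure_minutes(observed: Iterable[Tuple[bytes, float]], first_seed: bytes) -> int:
    """Estimate contact time with one contagious user.

    `observed` holds the locally stored (EphID, attenuation in dB) pairs.
    Each distinct matching EphID at close range counts as one time slot.
    """
    risky = contagious_ephids(first_seed)
    close_matches = {
        ephid
        for ephid, attenuation_db in observed
        if ephid in risky and attenuation_db <= MAX_ATTENUATION_DB
    }
    return len(close_matches) * MINUTES_PER_EPHID


def should_warn(observed: Iterable[Tuple[bytes, float]],
                published_seeds: Iterable[bytes]) -> bool:
    """Warn if the contact with any single contagious user was long enough."""
    stored = list(observed)
    return any(
        exposure_minutes(stored, seed) >= MIN_EXPOSURE_MINUTES
        for seed in published_seeds
    )
```

Note that the whole comparison runs on the phone: the server only ever sees the seeds of users who reported a positive test, never the list of EphIDs a phone has received.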

More details can be found in the DP-3T white paper.

Apple/Google Exposure Notifications API

The Google/Apple Exposure Notifications API (sometimes abbreviated as GAEN) is an API created jointly by Google and Apple that enables the creation of decentralized contact tracing apps. This API, which is based on the principles of the DP-3T protocol described above, can only be used by apps approved by Google and Apple (only one per country, created by official health authorities). Only decentralized contact tracing apps that do not collect any location information can be approved.

By default, Apple does not allow background apps to use Bluetooth, except for approved contact tracing apps using this API. This means that on the iPhone, this API is the only way to create a reliable contact tracing app. Apps that don’t use this API, such as the StopCovid France app, have to apply workarounds to keep the app waking up in the background, making them potentially less reliable and more battery-hungry.

The DP-3T framework has since been modified to make use of the Exposure Notifications API.

Some of the apps currently available using the Google/Apple Exposure Notifications API are SwissCovid (Switzerland), Immuni (Italy) and Corona-Warn-App (Germany).

The source code of the Android and iOS implementations of the framework behind the API was published in the second half of July 2020.

Frequently Asked Questions – Debunking some myths

These apps appear to be the subject of deliberate fake news campaigns or at least emotional reactions resulting from a lack of understanding of how they work. Here I will try to address some questions.

Will these apps violate my privacy? Will the authorities know who I meet and where I am, what I do?

Applications based on the Google/Apple Exposure Notification API do not know any personal information about their users: they don’t know your name, your phone number, where you live, or any other personal data. They also do not collect location data, so they don’t know where you are.

The only thing these apps do is exchange anonymous codes with other people in your vicinity. These codes change several times an hour, making it impossible to keep tracking you.

The exchanged codes are only stored on your own phone and not in a central database. So there is no way for the authorities to know how many people you met or who they were.

Contact tracing apps usually apply further measures to protect security and privacy: for example, dummy uploads prevent network traffic analysis from revealing a positive test, and CA or certificate pinning prevents MITM attacks.

This is not a mass surveillance tool or Big Brother, as some would have you believe.

How can I be sure that the app really works as promised and really does not collect and send private information?

These apps are usually open source, which means you can check the code to see exactly how they work and what they do. Even if you personally don’t have the knowledge to check the code, rest assured that enough experts are looking at it and will shout loudly when something is wrong. It has to be said: the only ones shouting loudly about these apps are politicians and activists who have clearly never looked at the source code or the documentation. For example, code reviews of Coronalert and Immuni have found that these apps do live up to their privacy claims.

Here are some links to the source code of the different apps and their documentation:

- Immuni (Italy) – Documentation

- SwissCovid (Switzerland) – DP-3T Documentation

- Corona-Warn-App (Germany) – Documentation

- CoronaMelder (The Netherlands) – Documentation

- Coronalert (Belgium) – Documentation

On the issue tracker of these apps you can report problems and ask questions.

The source code of the Exposure Notifications framework used by these apps is also available; see the Google and Apple links under Further information.

Why should I trust Google and Apple, who have a bad track record in privacy?

Actually, Google and Apple don’t even need this API to track you. If your phone runs iOS or Android with Google Play Services, you already have much larger privacy problems than these decentralized, open source contact tracing apps. The same goes if you use any of Facebook, Twitter, Instagram, TikTok, Netflix, Spotify, FaceApp or Tinder. These do know your name, your location, your interests and your friends, all without this API. Decentralized contact tracing apps know much less than any of these apps. This picture compares the different permissions SwissCovid, Facebook and WhatsApp can request.

That being said, there is now a way to run these contact tracing apps without using any Google services on your phone. The microG project now includes its own completely open source implementation of the Exposure Notification API. It can be installed on an Android distribution like LineageOS. It is confirmed that SwissCovid and Immuni work with microG’s implementation of the API; other apps probably do too. This way you can run these applications without having to rely on any of Google’s or Apple’s proprietary binaries.

Why does this app require Location setting to be enabled on my Android phone if no location information is collected?

To scan for nearby Bluetooth devices on Android, the Location setting needs to be switched on, because Bluetooth scanning can in theory be used to determine your location. Navigation apps, for example, use this to determine your location in underground tunnels. In reality, apps making use of the GAEN API are not allowed to request your location, and you can verify in the app’s source code that it never determines your location. Under Settings > Location > App permission you can still disable location access for individual apps. As of Android 11, which came out in September 2020, Location no longer needs to be enabled on your device in order to use contact tracing apps based on the Exposure Notification API.

Will this app give me a warning every time an infected person passes nearby, resulting in many false positives?

The apps will only give warnings when certain conditions, usually defined by the government based on epidemiological data, are satisfied. For example, the Italian Immuni app gives a warning when someone has been within a distance of 2 metres for at least 15 minutes. The SwissCovid app requires a 15-minute contact within a distance of 1.5 metres. Coronalert also shows low-risk exposures in the app, but only a high-risk exposure (at least 15 minutes within 1.5 to 2 m) results in a red screen and an explicit exposure notification. Only in this case are a test and quarantine recommended.

The distance is estimated from the attenuation of the signal. Unfortunately, attenuation depends on lots of parameters, such as the phone model being used and the direction in which it is held. Google adds a per-device correction value to the attenuation so that values should be similar between different devices. The thresholds being used are based on experiments in different environments and can be modified in the future in order to lower false positives and negatives. Immuni, for example, uses an attenuation threshold of 73 dB.
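
As a rough illustration of this estimate, the sketch below computes attenuation as the difference between the transmit power a phone advertises and the signal strength the receiver measures, after applying a per-device correction. The exact formula and the correction tables belong to the Exposure Notifications framework; the sign convention, the function names and the sample readings here are assumptions, and only the threshold value 73 is taken from the Immuni example above.

```python
def attenuation_db(tx_power_dbm: int, rssi_dbm: int, rssi_correction_db: int = 0) -> int:
    """Signal loss between sender and receiver, in dB (assumed convention)."""
    return tx_power_dbm - (rssi_dbm + rssi_correction_db)


def is_close(attenuation: int, threshold_db: int = 73) -> bool:
    """Treat a reading at or below the threshold as 'within range'."""
    return attenuation <= threshold_db


# Hypothetical readings, not measurements from any real phone.
near = attenuation_db(tx_power_dbm=-21, rssi_dbm=-85, rssi_correction_db=-5)
far = attenuation_db(tx_power_dbm=-21, rssi_dbm=-98, rssi_correction_db=-5)

print(near, is_close(near))
print(far, is_close(far))
```

A lower attenuation means a stronger received signal and therefore, on average, a shorter distance, which is why the check uses "at or below" the threshold.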

So no, contact tracing apps are not going to warn you whenever anyone who briefly passed nearby tests positive. You will only get a warning when reasonable thresholds are exceeded. Nevertheless, false positives and false negatives are possible. Authorities realize this too, and view the tracing app as a support tool, not as a complete replacement for manual contact tracing. A contact tracing app also does not replace a diagnostic test.

Are these apps useful if not everyone or at least a large part of the population downloads them?

Contact tracing apps are certainly useful, even if only a part of the population uses them.

A widely quoted article from Oxford University states that if 60% of the population installs the contact tracing app, this can completely stop the epidemic. What is often left out, however, is the next part of the sentence: “even with lower numbers of app users, we still estimate a reduction in the number of coronavirus cases and deaths.” The authors estimate that one infection will be averted for every one to two users.

So even numbers much lower than 60% help to flatten or crush the curve, saving lives.

Will this drain the battery of my phone?

By using Bluetooth Low Energy, battery consumption should be limited. Bluetooth Low Energy was created specifically for low energy consumption and is also used to connect to smartwatches and wireless headphones. Battery consumption should be less than 5% in the worst case, that is, compared with having Bluetooth completely disabled.

Do I need to install another app when I go abroad?

With the support of the European Union, a gateway service has been built that allows the keys of infected persons to be exchanged between European countries. On 19 October 2020 the Italian (Immuni), German (Corona-Warn-App) and Irish (COVID Tracker) apps started using it. Other decentralized contact tracing apps in the European Union are planned to connect to this gateway too; the Belgian Coronalert, for example, will add support in November.

Note, however, that keys can never be exchanged with the StopCovid France app, because it uses a centralized system instead of the decentralized DP-3T approach.

What do experts say about these apps?

First, it’s important to repeat that DP-3T, and hence the Google/Apple Exposure Notifications framework based on it, were designed by academics from universities in different countries. Prof. Bart Preneel (KU Leuven), a cryptographer who contributed to the DP-3T framework, says that “for once, Google and Apple are on the right side of privacy”.

The British Information Commissioner’s Office (the national data protection authority) “believes the CTF (Google/Apple’s contact tracing framework) is aligned with the principles of data protection by design and by default, including design principles around data minimisation and security.”

A report by Prof. Douglas Leith (Trinity College Dublin) analysed the network traffic of contact tracing apps. It concludes: “We find that the health authority client apps are generally well behaved from a privacy point of view, although the privacy of the Irish, the Polish and Latvian apps could be improved.” The report does criticize the closed source nature of the Google/Apple Exposure Notifications framework and the fact that Google Play Services sends private data to Google (something which happens on any Android phone that has Google Play Services installed, irrespective of the presence and usage of this framework). Update 23 July 2020: the source code of the framework itself is now available.

The biggest criticism of the framework appears to come from Prof. Serge Vaudenay, a cryptographer at EPFL. He complains about the closed source nature of GAEN and the fact that some attacks are possible. There is an answer by the DP-3T team to one of his papers. Update 23 July 2020: the source code of the GAEN framework itself is now available.

Conclusion

Forget all conspiracy theories and emotional objections by privacy activists who never looked at how these apps work: open source, decentralized contact tracing apps making use of the Google/Apple Exposure Notification API are not Big Brother and not an instrument of mass surveillance. The protocol was developed by academics specialized in IT security and privacy, and the source code of the apps can be verified by anyone. Extensive documentation describes how the apps work and what is done to protect the privacy of their users. By using anonymous ephemeral IDs and not collecting any location information, these contact tracing apps know less about you than the average social network app or your phone’s OS itself, so if you are worried about privacy, you have more important things to look at.

Contact tracing apps can be very useful in combating this epidemic, even if only a small part of the population uses them. For me, installing these apps is simply a matter of responsibility: to protect others, to protect our society and economy, and in the end to be protected myself by others using the app.

Further information

- DP-3T Documentation

- Immuni Documentation

- Google Exposure Notifications Internals for Android

- Apple Exposure Notification source code for iOS

- Tutto sulle notifiche di Immuni: Paolo de Rosa del ministero per l’Innovazione chiarisce i dubbi (Italian)

- Immuni, uno sguardo al codice sorgente delle app. Niente GPS né dati personali, l’allarme privacy è pretestuoso e esagerato (Italian)

- App Immuni, i giudizi degli sviluppatori sul codice del server: “Difficile fare di meglio” (Italian)

- Testing Apps for COVID-19 Tracing (TACT), Trinity College Dublin

History of this article

Update 23 July 2020: Added links to the source of Google and Apple Exposure Notifications framework – Added info about Belgian contact tracing app in development – Added link to picture comparing permissions requested by SwissCovid, Facebook and WhatsApp

Update 6 September 2020: Added question about Location requirement on Android phones

Update 19 September 2020: Added link to source code of Belgian Coronalert app – Added info about an Exposure Notifications API implementation in microG, enabling you to run these apps without Google services

Update 4 October 2020: Added information about EU gateway service

Update 19 October 2020: EU federation gateway service in use by 3 apps